Decoupled Architecture

Client: A rapidly scaling clinical data provider expanding its real-world evidence (RWE) tracking.

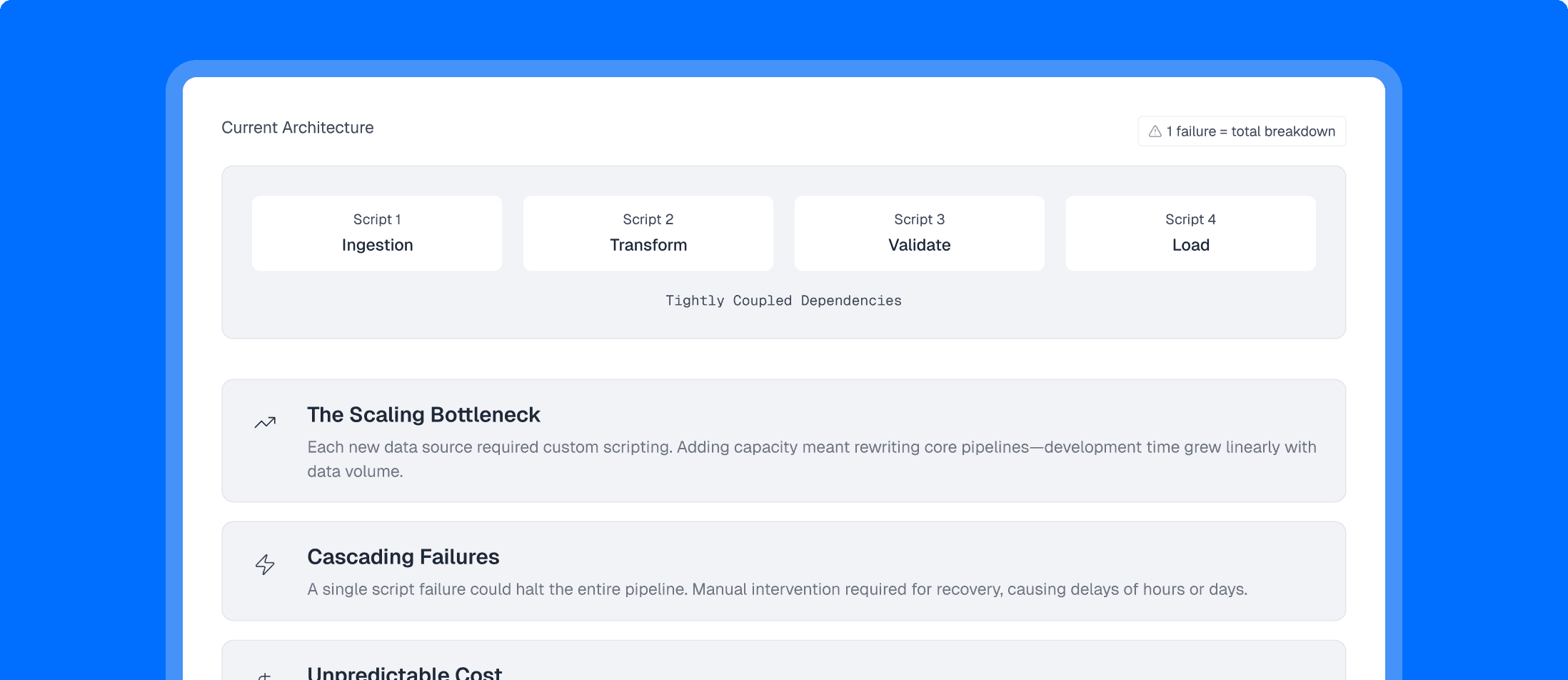

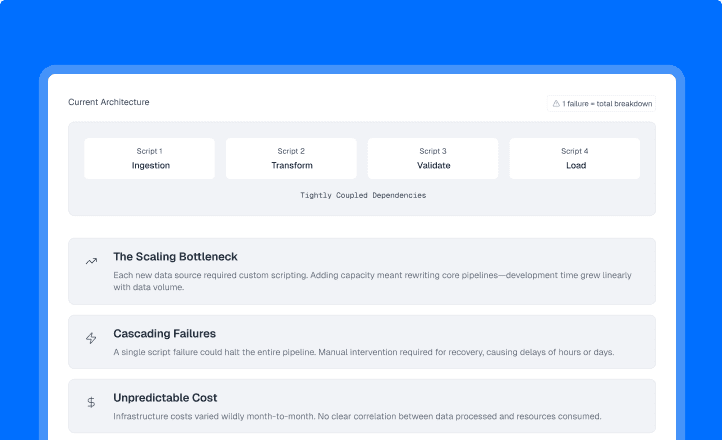

Growing volumes of biomedical data quickly outpaced the client's initial infrastructure. Their data operations team relied on "artisanal workflows": custom-built, tightly coupled scripts that handled everything from ingestion to transformation.

Sogody deployed a 6-week "Pipeline Hardening" engagement to overhaul their infrastructure. We replaced the brittle scripts with a highly controlled, containerized execution engine built on AWS-native orchestration.

We restructured their pipelines into a Directed Acyclic Graph (DAG) of independent steps, separating data ingestion, AI-agent extraction, and validation into distinct units.
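To illustrate the idea, here is a minimal sketch of a three-step pipeline expressed as a DAG, using Python's standard-library `graphlib`. The step names (`ingest`, `extract`, `validate`) and the `execution_order` helper are hypothetical stand-ins for this example, not the client's actual step definitions.

```python
from graphlib import TopologicalSorter

# Hypothetical step names. Each key maps to the set of steps it depends on.
PIPELINE = {
    "ingest": set(),
    "extract": {"ingest"},
    "validate": {"extract"},
}


def execution_order(dag):
    """Return step names in an order that respects every dependency."""
    # TopologicalSorter raises graphlib.CycleError if the graph contains a
    # cycle, which is what enforces the "acyclic" part of the DAG.
    return list(TopologicalSorter(dag).static_order())


order = execution_order(PIPELINE)
print(order)  # ['ingest', 'extract', 'validate']
```

Because each step declares only its upstream dependencies, a step can be changed, rerun, or replaced without touching the others.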

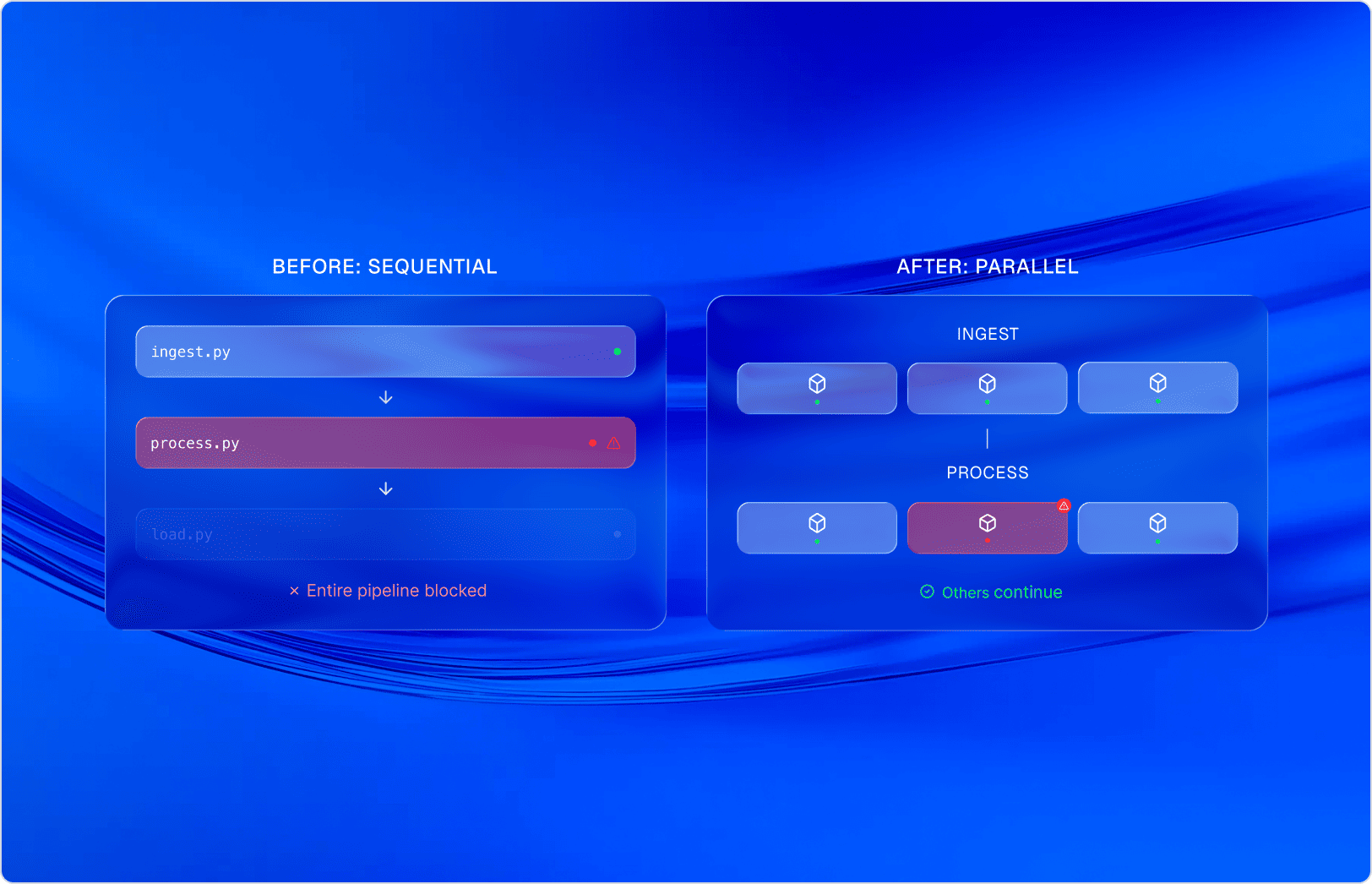

Each workflow step ran in its own container on AWS Batch. This enabled large-scale parallel processing, so multiple data domains could be processed simultaneously without resource contention.
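One way this could look in practice is sketched below: one AWS Batch job per (data domain, step) pair, with steps chained inside a domain and domains left independent so they can run in parallel. The domain names, queue name, job definition names, and the `plan_jobs` and `submit_all` helpers are assumptions made for this example. The request fields (`jobName`, `jobQueue`, `jobDefinition`, `containerOverrides`, `retryStrategy`, `dependsOn`) are parameters of the Batch `submit_job` call in boto3.

```python
def plan_jobs(domains, steps, job_queue):
    """Plan one Batch job per (domain, step) pair.

    Returns a list of (submit_job kwargs, upstream job name or None).
    Steps are chained within a domain; domains do not depend on each other.
    """
    planned = []
    for domain in domains:
        upstream = None
        for step in steps:
            name = f"{domain}-{step}"
            kwargs = {
                "jobName": name,
                "jobQueue": job_queue,
                "jobDefinition": f"{step}-job-def",  # hypothetical definition name
                "containerOverrides": {
                    "environment": [{"name": "DATA_DOMAIN", "value": domain}],
                },
                "retryStrategy": {"attempts": 3},  # illustrative retry count
            }
            planned.append((kwargs, upstream))
            upstream = name
    return planned


def submit_all(batch_client, planned):
    """Submit planned jobs in order, wiring dependsOn to upstream job IDs."""
    job_ids = {}
    for kwargs, upstream in planned:
        request = dict(kwargs)  # copy so the plan itself is not mutated
        if upstream is not None:
            request["dependsOn"] = [{"jobId": job_ids[upstream]}]
        response = batch_client.submit_job(**request)
        job_ids[request["jobName"]] = response["jobId"]
    return job_ids


# Hypothetical domains and queue name, printed without contacting AWS.
planned = plan_jobs(
    ["oncology", "cardiology"], ["ingest", "extract", "validate"], "rwe-queue"
)
for kwargs, upstream in planned:
    print(kwargs["jobName"], "runs after", upstream)
```

With real credentials, `submit_all(boto3.client("batch"), planned)` would submit the jobs. The sketch keeps that call out of the example so it runs without an AWS account.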

By containerizing the execution units, we instituted strict fault isolation. If an extraction agent timed out, the failure was localized to that single container. The orchestrator automatically managed retries and routed anomalies for review without blocking the rest of the production line.
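The retry-and-route behavior described above can be sketched in a few lines. This is an in-process simulation under stated assumptions, not the orchestrator itself: `run_with_isolation`, `make_flaky`, the task names, and the three-attempt limit are all hypothetical.

```python
def run_with_isolation(tasks, max_attempts=3):
    """Run each task independently; retry failures, then route to review.

    `tasks` maps a task name to a zero-argument callable. A task that
    keeps failing is recorded in the review queue and never stops the
    remaining tasks from running.
    """
    completed, review_queue = {}, []
    for name, task in tasks.items():
        last_error = None
        for _attempt in range(max_attempts):
            try:
                completed[name] = task()
                break
            except Exception as exc:  # the failure stays local to this task
                last_error = exc
        else:
            # Every attempt failed: flag for review and carry on.
            review_queue.append((name, str(last_error)))
    return completed, review_queue


def make_flaky(failures):
    """Build a task that times out `failures` times, then succeeds."""
    state = {"left": failures}

    def task():
        if state["left"] > 0:
            state["left"] -= 1
            raise TimeoutError("extraction agent timed out")
        return "ok"

    return task


completed, review = run_with_isolation({
    "extract-a": make_flaky(0),  # healthy
    "extract-b": make_flaky(1),  # recovers on retry
    "extract-c": make_flaky(5),  # exhausts retries, goes to review
})
print(completed)
print(review)
```

Here `extract-c` ends up in the review queue while the other two tasks complete, which mirrors how a single timed-out container does not hold up the rest of the run.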

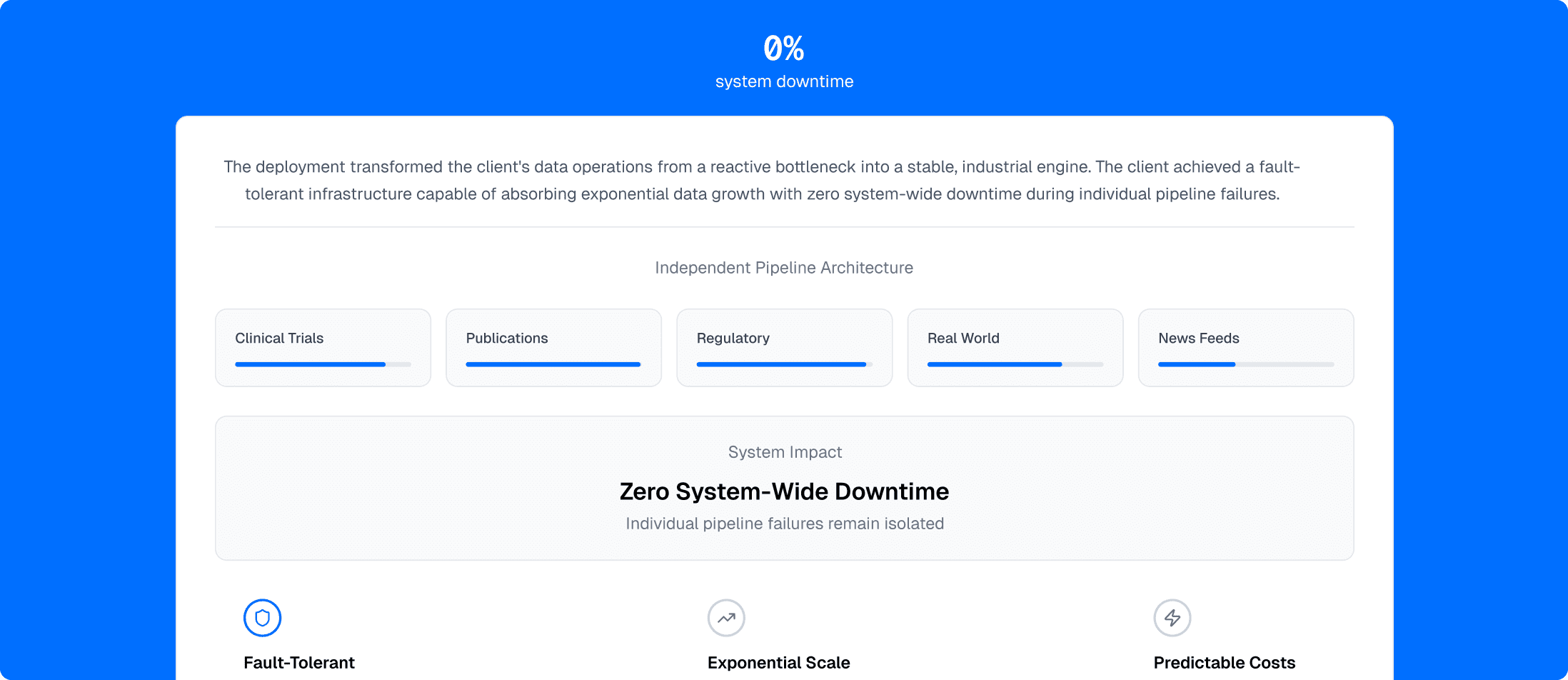

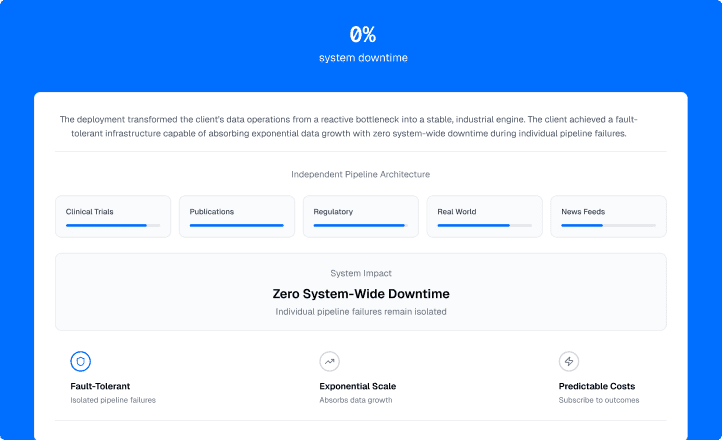

The deployment transformed the client's data operations from a reactive bottleneck into a stable, industrial engine. The client gained a fault-tolerant infrastructure capable of absorbing exponential data growth, with zero system-wide downtime during individual pipeline failures. They moved from paying for ad-hoc consulting hours to fix broken scripts to "subscribing to outcomes."